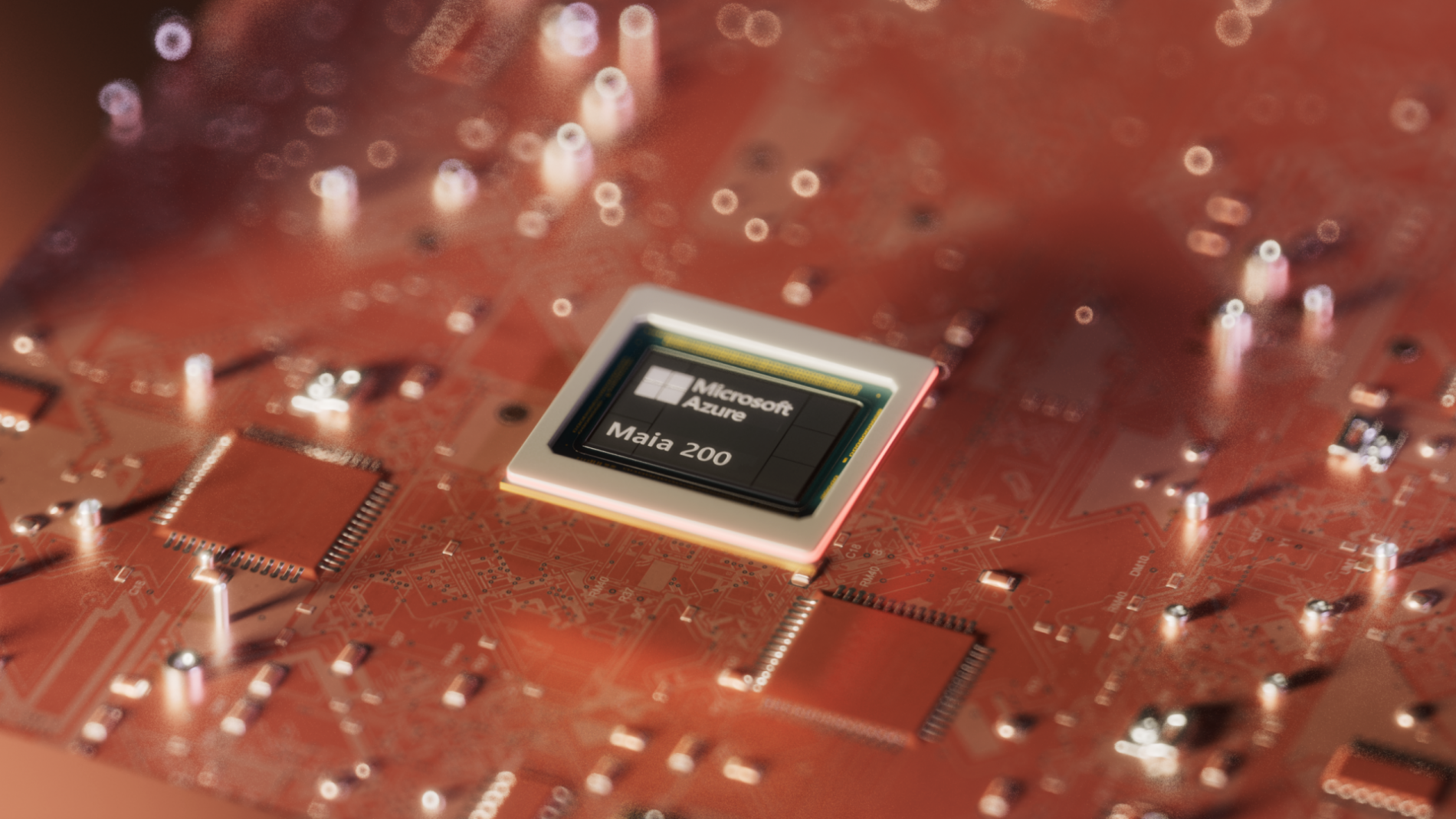

The Maia 200 marks Microsoft’s strategic entry into the high‑performance AI accelerator market, positioned as a direct counter to NVIDIA’s GPUs and Google’s TPUs.

Engineered as a custom AI coprocessor, the Maia 200 targets large‑scale cloud and edge workloads where throughput, efficiency, and software integration are critical.

Built around Microsoft Azure’s infrastructure requirements, it is designed to accelerate training and inference for generative models, large language models, and vision systems.

Its architecture reflects lessons learned from years of hyperscale deployment, balancing raw compute with power envelope constraints and thermal management.

By offering a tightly integrated path from Azure services to on‑chip primitives, the Maia 200 aims to reshape how enterprises consume AI‑driven compute.


Core Architecture of the Maia 200

The Maia 200 employs a 5 nm multi‑die configuration that combines a central control unit with four parallel tensor‑optimized clusters.

Each cluster integrates a vectorized compute fabric, high‑bandwidth SRAM tiles, and mesh‑style interconnects optimized for low‑latency data movement.

Tasks are dispatched by an intelligent scheduler that considers job priority, latency SLAs, and energy targets.

This allows the Maia 200 to handle mixed‑workload scenarios such as batch training, online inference, and real‑time analytics on the same silicon.
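To make the dispatch idea concrete, here is a minimal sketch of a scheduler that orders jobs by priority, breaks ties by the tighter latency SLA, and defers anything that would exceed an energy budget. It is an illustration only, not Microsoft's scheduler: the `Job` fields, `dispatch_order`, and the joule figures are all hypothetical.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    priority: int          # larger value = more urgent (assumed convention)
    latency_sla_ms: float  # tighter SLA wins a priority tie
    energy_joules: float   # estimated energy cost of running the job


def dispatch_order(jobs, energy_budget_joules):
    """Return (dispatched, deferred) job names under an energy budget."""
    # The index i breaks remaining ties so Job objects are never compared.
    heap = [(-j.priority, j.latency_sla_ms, i, j) for i, j in enumerate(jobs)]
    heapq.heapify(heap)

    dispatched, deferred = [], []
    remaining = energy_budget_joules
    while heap:
        _, _, _, job = heapq.heappop(heap)
        if job.energy_joules <= remaining:
            remaining -= job.energy_joules
            dispatched.append(job.name)
        else:
            deferred.append(job.name)
    return dispatched, deferred


if __name__ == "__main__":
    jobs = [
        Job("batch-train", priority=1, latency_sla_ms=5000.0, energy_joules=60.0),
        Job("chat-infer", priority=3, latency_sla_ms=50.0, energy_joules=10.0),
        Job("analytics", priority=2, latency_sla_ms=500.0, energy_joules=40.0),
    ]
    print(dispatch_order(jobs, energy_budget_joules=60.0))
```

In this toy run the latency‑sensitive inference job goes first and the batch training job is deferred once the budget is spent, which is the kind of trade‑off a mixed‑workload scheduler has to make.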

A defining characteristic of the Maia 200 is its adaptive clocking and voltage‑scaling system.

For inference‑heavy workloads, the chip can operate at lower frequencies while maintaining high utilization through wide vector pipelines.

During training phases that demand higher throughput, clocks can ramp up to 3.4 GHz while remaining within defined power budgets.

As Microsoft’s senior AI hardware architect remarked,

“The Maia 200 is built to match the dynamic nature of modern AI workloads, not just peak TOPS.”
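The adaptive clocking described above can be reasoned about with the standard CMOS dynamic‑power estimate, P ≈ C·V²·f. The sketch below picks the fastest operating point that fits a power budget. The effective capacitance and the voltage/frequency pairs are made‑up illustrative values, not Maia 200 specifications; only the 3.4 GHz ceiling comes from the text.

```python
def dynamic_power_watts(c_eff_farads, voltage, freq_hz):
    """Classic CMOS dynamic power estimate: P = C * V^2 * f."""
    return c_eff_farads * voltage ** 2 * freq_hz


def pick_operating_point(points, power_budget_watts, c_eff_farads):
    """Return the fastest (freq_hz, voltage) pair within the budget, or None."""
    feasible = [
        (freq_hz, voltage)
        for freq_hz, voltage in points
        if dynamic_power_watts(c_eff_farads, voltage, freq_hz) <= power_budget_watts
    ]
    # Tuples compare by frequency first, so max() yields the highest clock.
    return max(feasible, default=None)


if __name__ == "__main__":
    # Hypothetical operating points: (frequency in Hz, core voltage in volts).
    points = [(1.8e9, 0.70), (2.6e9, 0.85), (3.4e9, 1.00)]
    c_eff = 6e-8  # assumed effective switched capacitance, in farads
    for budget in (220, 160, 95):
        print(budget, pick_operating_point(points, budget, c_eff))
```

Because power grows with the square of voltage, stepping down one operating point saves far more power than the drop in clock speed alone would suggest, which is why lower‑frequency inference modes can be disproportionately efficient.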

Maia 200 Performance Configuration Table

| Mode | Peak Compute (TOPS) | Power Envelope (W) | Operating Temperature (°C) |
|---|---|---|---|
| Base Training Mode | 520 | 220 | 78 |
| Inference Mode | 380 | 160 | 72 |
| Eco‑Efficiency Mode | 240 | 95 | 65 |

These configurations illustrate how the Maia 200 can be tuned for different operational profiles.

Base Training Mode suits large‑scale distributed training clusters within Azure.

Inference Mode targets latency‑sensitive services, while Eco‑Efficiency Mode supports edge deployments and scale‑out environments.
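A quick way to compare the three modes is compute per watt. The snippet below simply divides the peak TOPS by the power envelope quoted in the table; the helper name `tops_per_watt` is ours, and the figures are the article's, not independently measured.

```python
# Peak compute (TOPS) and power envelope (W) as quoted in the table above.
MODES = {
    "Base Training Mode": (520, 220),
    "Inference Mode": (380, 160),
    "Eco-Efficiency Mode": (240, 95),
}


def tops_per_watt(modes):
    """Map each mode name to its peak TOPS divided by its power envelope."""
    return {name: tops / watts for name, (tops, watts) in modes.items()}


if __name__ == "__main__":
    for name, efficiency in tops_per_watt(MODES).items():
        print(f"{name}: {efficiency:.3f} TOPS/W")
```

On these numbers the modes land at roughly 2.36, 2.38, and 2.53 TOPS/W respectively, so the efficiency gain from the lowest‑power mode is real but modest, about 7% over Base Training Mode.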

Maia 200 in Azure and Edge AI Environments

The Maia 200 is deeply integrated into Microsoft Azure, where it powers AI‑optimized virtual machines and dedicated inference endpoints.

In cloud data centers, multiple Maia 200 units can be ganged together into larger AI pods, delivering exascale‑level capabilities.

Workloads such as language model training, multimodal reasoning, and probabilistic forecasting see measurable reductions in iteration time.

From the operator’s perspective, the Maia 200 presents a familiar API surface, hiding much of the underlying hardware complexity.

At the edge, the Maia 200 is packaged into compact accelerator modules that slot into Azure Stack Edge and similar appliances.

These units enable real‑time processing of video feeds, sensor telemetry, and industrial signals without round‑trips to the core cloud.

In manufacturing and logistics, the Maia 200 can detect anomalies in production lines or monitor equipment health in real time.

For retail and smart‑city deployments, it supports visual analytics, occupancy tracking, and time‑series pattern recognition.

Connectivity, Networking, and I/O on the Maia 200

Even the most powerful accelerator is constrained by its I/O subsystem.

The Maia 200 integrates dual 100 Gbps Ethernet ports, four PCIe 5.0 x4 links, and eight NVMe 4.0‑compatible storage interfaces.

These links support high‑bandwidth data movement between CPU hosts, memory pools, and storage subsystems.
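For a sense of scale, the raw link rates can be worked out from the port counts given above. The sketch assumes that the PCIe figure means four separate x4 links, and it uses the PCIe 5.0 signaling rate of 32 GT/s per lane with 128b/130b encoding. Both results are theoretical ceilings before protocol overhead, not measured throughput.

```python
def ethernet_gbytes_per_s(ports, gbits_per_port):
    """Aggregate raw Ethernet bandwidth in GB/s (8 bits per byte)."""
    return ports * gbits_per_port / 8


def pcie5_gbytes_per_s(links, lanes_per_link):
    """Aggregate PCIe 5.0 bandwidth in GB/s, one direction."""
    gigatransfers_per_s = 32.0    # PCIe 5.0 rate per lane
    encoding_efficiency = 128 / 130  # 128b/130b line encoding
    lanes = links * lanes_per_link
    return lanes * gigatransfers_per_s * encoding_efficiency / 8


if __name__ == "__main__":
    print(f"Ethernet: {ethernet_gbytes_per_s(2, 100):.1f} GB/s")
    print(f"PCIe 5.0: {pcie5_gbytes_per_s(4, 4):.1f} GB/s")
```

Under these assumptions the Ethernet ports top out at 25 GB/s combined and the PCIe links at about 63 GB/s per direction, which suggests host‑to‑device traffic has more headroom than network traffic.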

Flexible queue‑management engines and traffic‑shaping policies ensure that latency‑sensitive AI inferencing is not starved by background data flows.

Flow‑control mechanisms prevent congestion while maintaining predictable response times.
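Traffic shaping of this kind is commonly built on a token bucket, which caps the sustained rate of a background flow while permitting short bursts. The class below is a generic sketch of that technique, not the Maia 200's actual queue‑management engine; the rates are arbitrary, and time is passed in explicitly to keep the example deterministic.

```python
class TokenBucket:
    """Token-bucket shaper: tokens are bytes that refill at a fixed rate."""

    def __init__(self, rate_bytes_per_s, burst_bytes):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start full so an initial burst is allowed
        self.last = 0.0            # timestamp of the previous update, in seconds

    def allow(self, size_bytes, now):
        """Return True and consume tokens if the packet may be sent at `now`."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False


if __name__ == "__main__":
    background = TokenBucket(rate_bytes_per_s=1000, burst_bytes=1500)
    print(background.allow(1500, now=0.0))   # burst fits in the full bucket
    print(background.allow(500, now=0.25))   # only 250 tokens refilled so far
    print(background.allow(500, now=1.0))    # bucket has refilled to 1000
```

Shaping the bulk flows this way leaves link capacity free for inference requests, which can then be served from a separate, unthrottled queue.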

For memory and storage, the Maia 200 supports up to 1 TB of high‑bandwidth memory and 16 TB of NVMe storage.

This configuration enables rapid loading of model weights, cache re‑use of intermediate tensors, and fast checkpointing.

Data redundancy and parity‑based schemes protect against storage‑level failures, enhancing system reliability.
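The article does not say which parity scheme is used. The simplest member of that family is single XOR parity, as in RAID 5, where any one lost block can be rebuilt from the survivors. The sketch below illustrates that general technique with hypothetical helper names.

```python
from functools import reduce


def xor_parity(blocks):
    """XOR equal-length byte blocks position by position into a parity block."""
    if len({len(block) for block in blocks}) != 1:
        raise ValueError("all blocks must have the same length")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild_missing(surviving_blocks, parity):
    """Recover the single missing block from the survivors plus parity."""
    return xor_parity(list(surviving_blocks) + [parity])


if __name__ == "__main__":
    shards = [b"wgt0", b"wgt1", b"wgt2"]  # stand-ins for checkpoint shards
    parity = xor_parity(shards)
    recovered = rebuild_missing([shards[0], shards[2]], parity)
    print(recovered == shards[1])
```

Single parity tolerates only one failure per stripe; production systems that need to survive multiple failures typically use erasure codes such as Reed‑Solomon instead.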

From an operational standpoint, these I/O capabilities allow the Maia 200 to interoperate with existing storage and fabric infrastructures.

Key Advantages of Maia 200 I/O Design

- Dual 100 Gbps Ethernet for high‑speed interconnectivity.

- PCIe 5.0 support for low‑latency peripheral expansion.

- Up to 1 TB of high‑bandwidth memory for real‑time data.

- NVMe 4.0‑compatible storage for fast access and low latency.

- Integrated QoS and traffic‑shaping for predictable latency.

Software, Ecosystem, and Developer Experience on the Maia 200

The value of the Maia 200 is reinforced by its software ecosystem, which includes Azure‑native SDKs and runtime environments.

Developers can write in Python, C++, and Rust while leveraging higher‑level AI frameworks such as PyTorch and ONNX.

Microsoft provides optimized backends that translate standard model graphs into Maia‑specific instructions, preserving compatibility.

Pre‑built inference engines and vision pipelines accelerate deployment of common AI patterns without requiring low‑level programming.

Orchestration and deployment are managed through declarative YAML manifests and Azure‑specific tooling.

Operators define compute profiles, resource constraints, and update policies, while Kubernetes handles scheduling and scaling.

Automated canary rollouts and rollback mechanisms reduce the risk of introducing regressions.

The Maia 200 runtime exposes telemetry streams that report latency, memory usage, and utilization metrics per workload.
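As an example of what an operator might do with such a telemetry stream, the sketch below groups latency samples by workload and reports the 95th percentile using the nearest‑rank method. The `(workload, latency_ms)` sample format is an assumption made for illustration; the article does not document the real telemetry schema.

```python
from collections import defaultdict


def p95_latency_by_workload(samples):
    """Return {workload: p95 latency in ms} using the nearest-rank method."""
    by_workload = defaultdict(list)
    for workload, latency_ms in samples:
        by_workload[workload].append(latency_ms)

    result = {}
    for workload, values in by_workload.items():
        values.sort()
        # Integer form of ceil(0.95 * n), avoiding float rounding surprises.
        rank = (95 * len(values) + 99) // 100
        result[workload] = values[rank - 1]
    return result


if __name__ == "__main__":
    samples = [("chat", float(ms)) for ms in range(1, 101)]
    samples += [("vision", 8.0), ("vision", 12.0)]
    print(p95_latency_by_workload(samples))
```

Tail percentiles are usually more informative than averages for latency SLAs, because a small fraction of slow requests can dominate user‑visible behavior.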

Security features include hardware‑enforced isolation, secure boot, and attestation capabilities.

Typical Maia 200 Developer Workflow

- Choose an Azure AI‑optimized virtual machine powered by the Maia 200.

- Deploy models via standard frameworks with Maia‑aware backends.

- Fine‑tune performance using profiling tools and utilization dashboards.

- Scale horizontally across multiple Maia 200 nodes as demand grows.

- Monitor health, costs, and utilization through Azure portal analytics.

Reliability, Lifecycle, and Competitive Positioning of the Maia 200

Reliability is a cornerstone of the Maia 200, especially in mission‑critical AI deployments.

Redundant power supplies, hot‑swappable components, and fault‑tolerant interconnects ensure that services remain available during component failures.

Telemetry data from sensors and counters is aggregated into predictive health models.

These models correlate temperature, power draw, and error rates to forecast degradation and schedule maintenance.
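A real predictive health model would combine many signals, but the core idea of forecasting degradation can be shown with a single one. The sketch below fits a least‑squares line to daily temperature readings and extrapolates to a threshold. The function name and sample readings are hypothetical; the 78 °C limit is borrowed from the Base Training Mode row of the table above.

```python
def forecast_days_to_threshold(daily_values, threshold):
    """Estimate days from the last reading until the trend reaches threshold.

    Returns None when there are too few readings or no upward trend.
    """
    n = len(daily_values)
    if n < 2:
        return None

    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(daily_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, daily_values))

    slope = sxy / sxx
    if slope <= 0:
        return None  # flat or improving: nothing to schedule

    intercept = mean_y - slope * mean_x
    crossing_day = (threshold - intercept) / slope
    return max(0.0, crossing_day - (n - 1))


if __name__ == "__main__":
    temps_c = [70.0, 71.0, 72.0, 73.0, 74.0]  # one reading per day
    print(forecast_days_to_threshold(temps_c, threshold=78.0))
```

A forecast like this gives operators a window in which to schedule maintenance before the limit is actually reached, rather than reacting to an alarm.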

Lifecycle management is handled through Microsoft’s centralized update and firmware platform.

Updates are rolled out in stages, first tested on a small subset of nodes before broader deployment.

Each Maia 200 reports its compatibility status and readiness for new releases, enabling smooth upgrades.
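The staged rollout can be pictured as a sequence of growing waves drawn only from nodes that report themselves ready. The following is a minimal sketch under assumed parameters (a 5% first wave and 4x growth per wave); the node names, readiness flags, and `plan_waves` helper are illustrative and do not reflect Microsoft's update platform.

```python
def plan_waves(nodes, first_wave_fraction=0.05, growth=4):
    """Split ready nodes into a small first wave followed by growing waves.

    `nodes` maps node name -> readiness flag; nodes that are not ready are
    left out of the plan entirely.
    """
    ready = [name for name, is_ready in nodes.items() if is_ready]
    waves = []
    start = 0
    size = max(1, int(len(ready) * first_wave_fraction))
    while start < len(ready):
        waves.append(ready[start:start + size])
        start += size
        size *= growth
    return waves


if __name__ == "__main__":
    # 21 hypothetical nodes; node-3 reports that it is not ready.
    fleet = {f"node-{i}": (i != 3) for i in range(21)}
    for number, wave in enumerate(plan_waves(fleet), start=1):
        print(f"wave {number}: {len(wave)} nodes")
```

Here the 20 ready nodes are updated in waves of 1, 4, and 15, so a faulty release is caught while it affects only a small part of the fleet.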

The platform is designed to remain viable for 5–7 years of active service, aligning with Azure’s hardware refresh cycles.

In the broader landscape, the Maia 200 is Microsoft’s deliberate response to NVIDIA’s GPU dominance and Google’s TPU ecosystem.

By tightly integrating hardware with Azure services, it offers a differentiated path for enterprises that want vertically aligned AI stacks.

For developers and operators, the Maia 200 represents a powerful, scalable, and maintainable option for building next‑generation AI services.

In closing, the Maia 200 underpins Microsoft’s vision for an AI‑native cloud and edge, competing head‑on with NVIDIA and Google.

Through its balanced architecture, efficient software stack, and deep Azure integration, the Maia 200 delivers a compelling alternative for modern AI workloads.
